Braintrust

"If you think that your customers are using AI search or AI tools like ChatGPT, Claude, or Claude Code, you should be investing there. If you don't do it right now, you'll fall behind everyone."

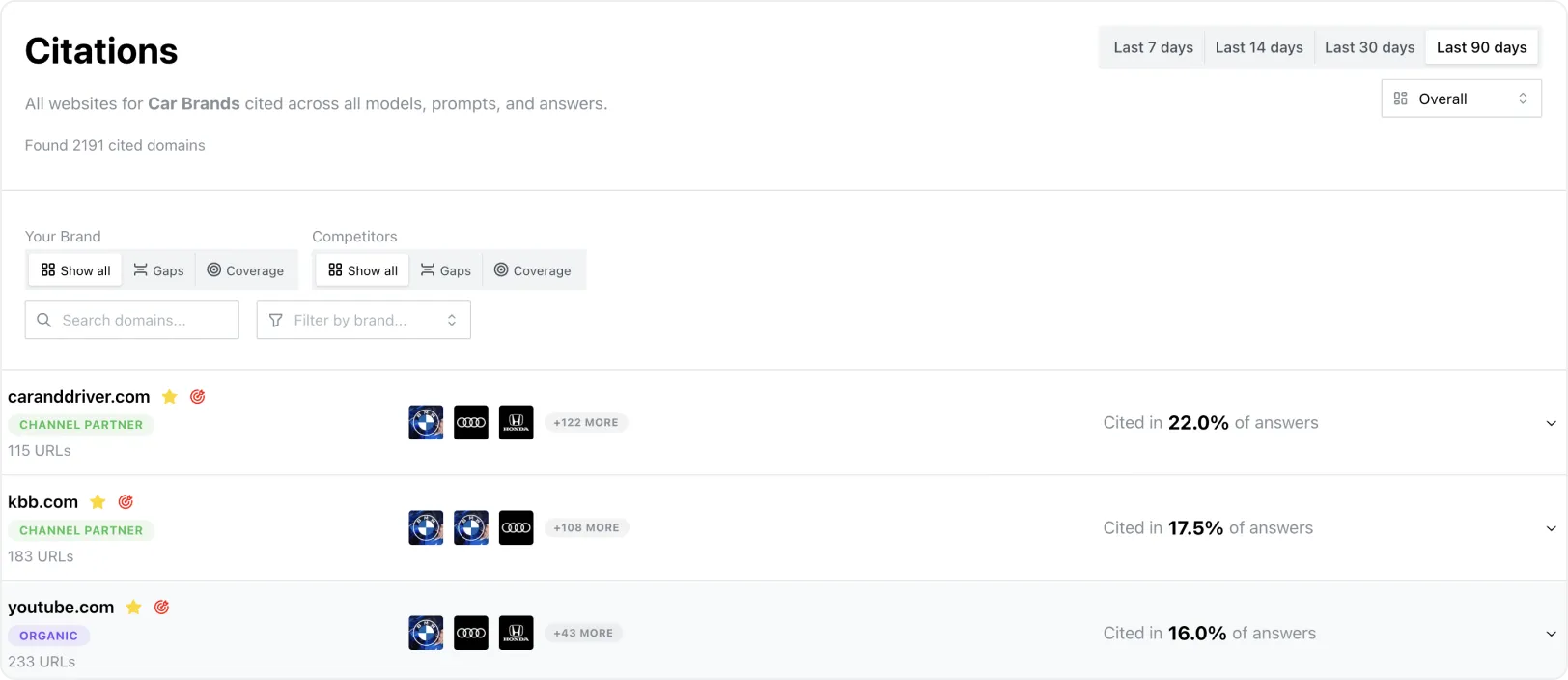

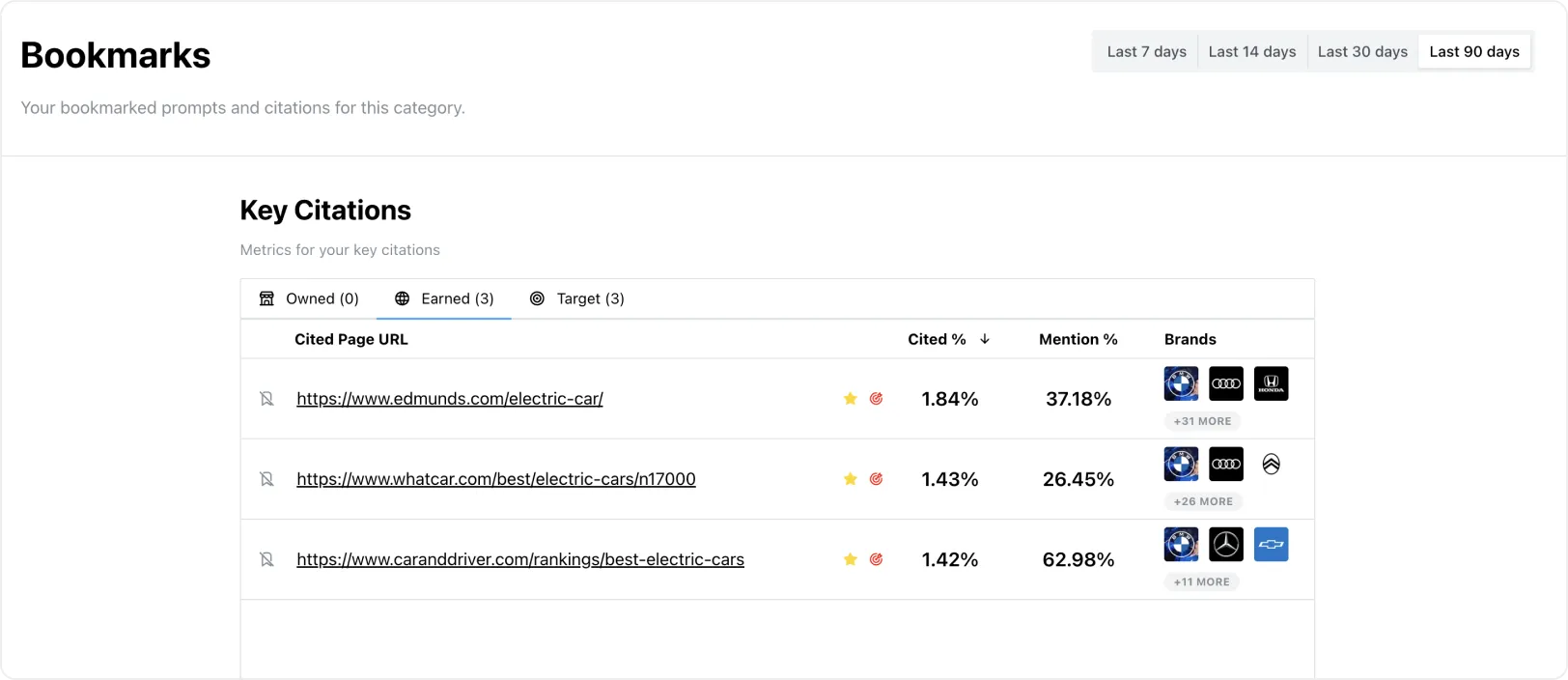

LLMO (Large Language Model Optimization) starts with knowing exactly which content LLMs cite, and that only becomes visible when you track citations systematically. Gauge tracks LLM citation decisions daily, so you can see why some content consistently gets chosen while similar content gets ignored.

For example, when someone asks ChatGPT "What are the best customer service platforms?", you'll see which sources actually get cited and how often your brand appears compared to competitors.

This isn't about content quality. Your content might be comprehensive, well-researched, and authoritative. But if LLMs don't consistently choose it for citations, you're invisible to the prospects who matter most.

The brands that get cited consistently capture mind share, while others remain unknown to prospects using AI for research.

The problem isn't random. LLMs follow consistent reference patterns that become clear when you track them systematically. Your competitors are figuring out these patterns while you stay invisible in the responses that matter.

You might be wondering: how do LLMs actually decide which sources to cite, and why are some brands consistently chosen while others aren't?

The stakes are clear: in a world where AI platforms control discovery, reference intelligence determines who gets found and who stays invisible.

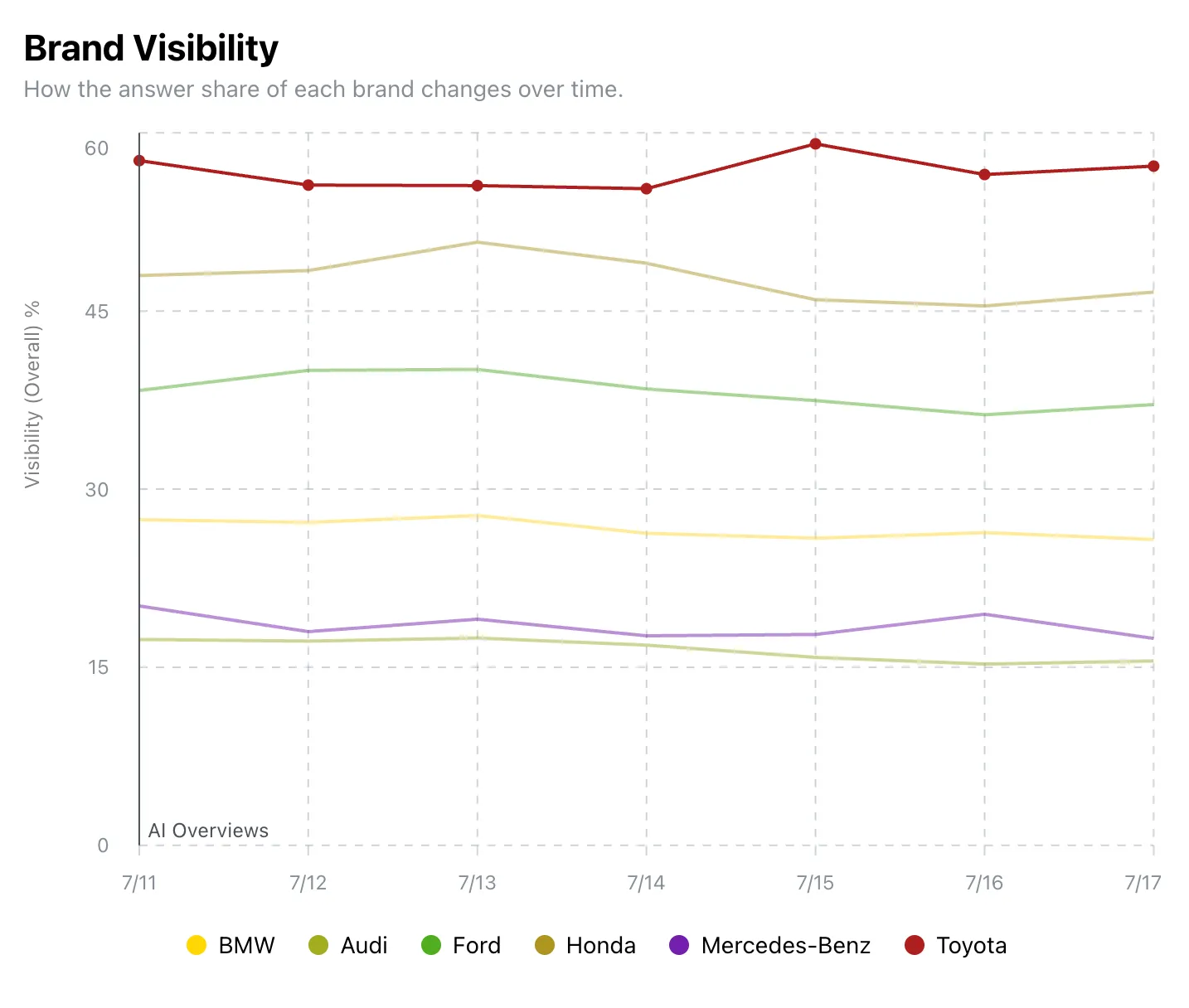

Each LLM platform shows different mention preferences. ChatGPT consistently cites certain source types, while Gemini prefers different content characteristics. Perplexity and Google AI Overviews each follow their own reference tendencies.

Understanding these platform differences explains why you might get cited on one platform but completely ignored on others, even for identical queries about your expertise.

According to IIT/Princeton research on generative engine optimization, optimization strategies can boost visibility by up to 40% in AI-generated responses, demonstrating the measurable impact of understanding these citation patterns.

This raises the question: what are most teams missing that keeps them invisible in the AI discovery game?

The teams that understand mention patterns have a massive advantage. They know which content actually gets chosen while others keep optimizing for metrics that don't drive AI discovery.

The next logical question is: how do you actually see these hidden citation patterns and use them to improve your visibility?

For instance, when we track "Best marketing automation platforms," you'll see which sources each LLM consistently cites, how often your brand appears compared to competitors, and which opportunities you're missing.

What Our Intelligence Reveals:

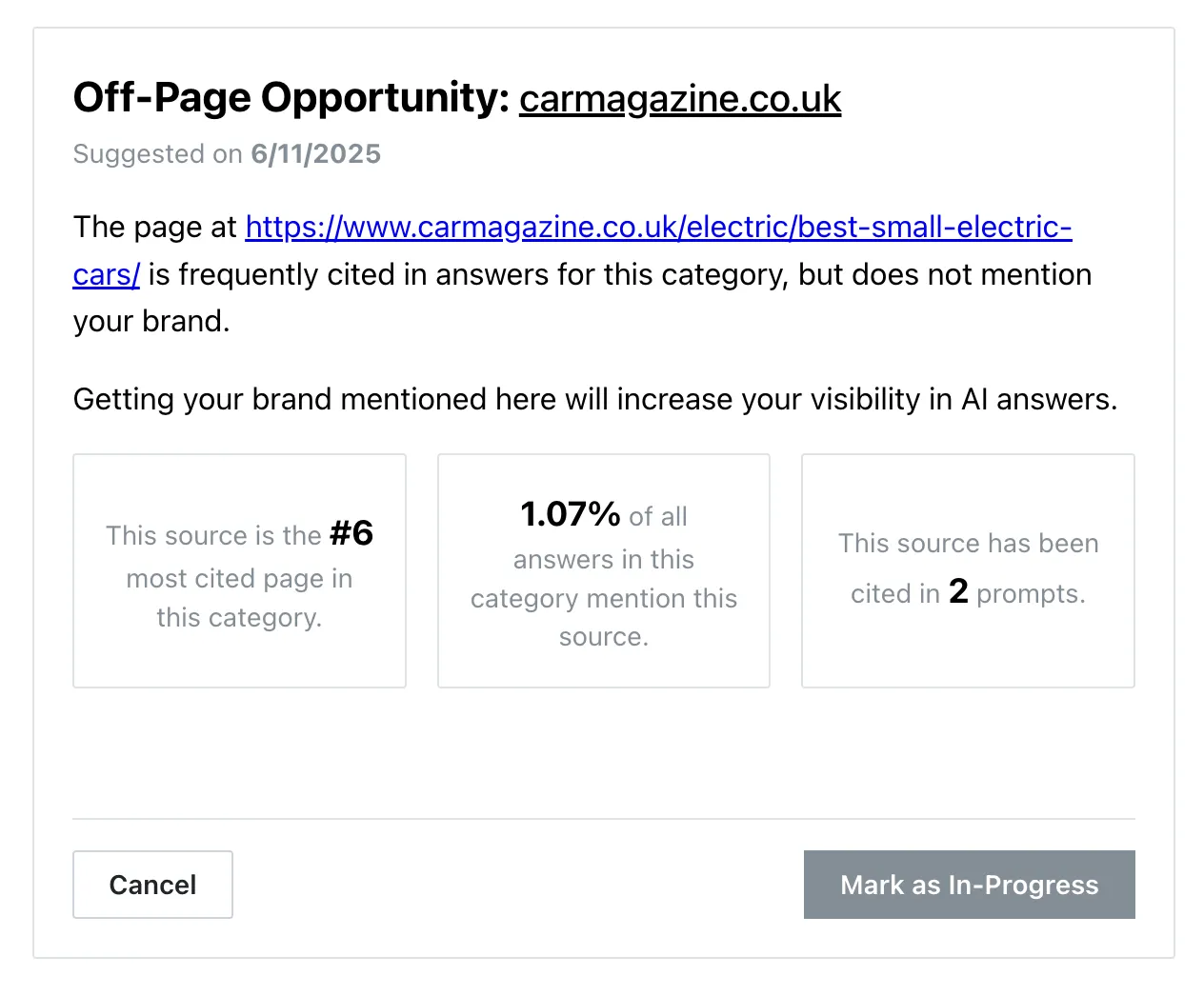

Our Action Center analyzes mention data to show you specific opportunities to increase how often you're mentioned. Instead of generic advice, you'll see exactly which gaps to target and which high-frequency sources to pursue.

You'll see content gaps where competitors consistently get mentioned, high-value sources that get referenced frequently in your industry, and specific opportunities to increase your visibility based on actual LLM behavior.

Monitor how changes affect your mention frequency across LLM platforms. When you create new content or get featured on high-visibility sources, you'll see exactly how it impacts your appearance rates on ChatGPT, Gemini, Perplexity, and Google AI Overviews.

One customer used our data to understand which sources get referenced most in their industry and focused on getting featured in those publications. They went from appearing in 22% of relevant responses to 42% in just four days.
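To put that jump in perspective, here's a quick sketch of the arithmetic. The `visibility_change` helper is a hypothetical name used for illustration only; it isn't part of any product API.

```python
def visibility_change(before, after):
    """Return (percentage-point change, relative change in %) between two mention rates.

    `before` and `after` are fractions of relevant responses (0.22 means 22%).
    Hypothetical helper for illustration only.
    """
    points = (after - before) * 100             # absolute change in percentage points
    relative = (after - before) / before * 100  # change relative to the starting rate
    return round(points, 1), round(relative, 1)


points, relative = visibility_change(0.22, 0.42)
print(f"+{points} percentage points, a {relative}% relative increase")
```

Going from 22% to 42% is a gain of 20 percentage points, which means the brand appeared in roughly 91% more responses than before.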

Another team analyzed our intelligence to identify the content gaps where competitors dominated mentions and tripled their visibility by targeting those specific opportunities.

Teams with source intelligence see faster results because they focus on what actually drives mentions instead of guessing about LLM preferences.

You'll stop guessing about LLM citations and start making decisions based on actual citation patterns that drive discovery.

LLMO shows you which content actually gets cited when LLMs generate responses in your industry. For example, when someone asks ChatGPT "Best HR platforms," you'll see which sources get mentioned, how often your brand appears, and how your citation frequency compares to competitors across different platforms.

Traditional SEO tracks website rankings and traffic. Citation intelligence monitors which content actually gets mentioned in LLM responses. You'll see real citation data rather than assumptions about what might work, helping you understand why some content consistently gets cited while similar content doesn't.

Start by understanding exactly where and why competitors get cited more often. Look at which prompts consistently favor them, analyze the sources they get featured in, and identify the content characteristics that make them citation-worthy. Focus on closing the biggest gaps first rather than trying to compete everywhere at once.
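If you already log which brands appear in each LLM response, ranking your biggest gaps can be as simple as the sketch below. The record layout, the `biggest_gaps` function, and the brand names (`AcmeHR`, `PeopleCo`) are all made up for illustration; your own tracking data will likely look different.

```python
from collections import defaultdict

# Hypothetical sample data: one record per tracked response.
records = [
    {"platform": "ChatGPT", "prompt": "Best HR platforms", "brands": ["PeopleCo"]},
    {"platform": "Gemini", "prompt": "Best HR platforms", "brands": ["PeopleCo"]},
    {"platform": "Perplexity", "prompt": "Best HR platforms", "brands": ["AcmeHR", "PeopleCo"]},
    {"platform": "ChatGPT", "prompt": "HR software for startups", "brands": ["AcmeHR"]},
    {"platform": "Gemini", "prompt": "HR software for startups", "brands": ["AcmeHR", "PeopleCo"]},
]


def biggest_gaps(records, brand, competitor):
    """Rank prompts by (competitor mention rate - brand mention rate), largest gap first."""
    totals = defaultdict(int)
    ours = defaultdict(int)
    theirs = defaultdict(int)
    for r in records:
        prompt = r["prompt"]
        totals[prompt] += 1
        ours[prompt] += brand in r["brands"]         # True counts as 1
        theirs[prompt] += competitor in r["brands"]
    gaps = {p: (theirs[p] - ours[p]) / totals[p] for p in totals}
    return sorted(gaps.items(), key=lambda item: item[1], reverse=True)


for prompt, gap in biggest_gaps(records, "AcmeHR", "PeopleCo"):
    print(f"{prompt}: {gap:+.2f}")
```

Prompts with the largest positive gap are where the competitor shows up far more often than you do, so they're the natural place to start.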

Absolutely. Many teams see significant improvements by optimizing existing content and pursuing strategic citation opportunities. You can update current content to better match what gets cited, target high-value sources for placement, and focus on the platforms where you have the best chance of gaining ground quickly.

Focus on your biggest competitive gaps and highest-impact opportunities:

Citation frequency depends on four things: how reputable the source is within your industry, how comprehensive the content is and how clearly it demonstrates expertise, how credible the publication is, and platform-specific preferences that vary between AI systems. Understanding these factors through tracking data helps explain why some content gets cited consistently while similar content doesn't.

Focus on ChatGPT, Gemini, Perplexity, and Google AI Overviews since they have the largest user bases. Each platform shows different preferences, so comprehensive intelligence means understanding which sources each platform favors rather than assuming they all work the same way.

Teams typically see improvements within days of focusing on proven opportunities. One customer increased mention rates from 22% to 42% in four days after understanding the patterns we identified. Success comes from targeting specific gaps rather than creating content without direction.

Yes, because tracking focuses on actual AI platform behavior rather than assumptions about what works. According to Accenture research, 76% of executives believe AI will significantly impact customer discovery. Understanding mention patterns helps improve visibility regardless of your industry.

Track mention frequency across prompts in your industry, visibility rates compared to competitors over time, performance improvements across different AI platforms, and citation trends that show whether you're gaining ground. Gauge provides these metrics so you can see which efforts actually improve your AI visibility.
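As a rough illustration of the first two metrics, here's a minimal sketch that computes mention rate per platform from logged responses. It assumes a simple record format of our own invention; `mention_rate` and the brand names are hypothetical and don't reflect how Gauge computes its metrics internally.

```python
from collections import defaultdict

# Hypothetical sample data: one record per tracked (platform, prompt) response.
responses = [
    {"platform": "ChatGPT", "prompt": "Best HR platforms", "brands": ["AcmeHR", "PeopleCo"]},
    {"platform": "ChatGPT", "prompt": "Top HR software for startups", "brands": ["PeopleCo"]},
    {"platform": "Gemini", "prompt": "Best HR platforms", "brands": ["AcmeHR"]},
    {"platform": "Gemini", "prompt": "Top HR software for startups", "brands": ["AcmeHR", "PeopleCo"]},
]


def mention_rate(records, brand):
    """Return {platform: fraction of that platform's responses mentioning `brand`}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for r in records:
        totals[r["platform"]] += 1
        if brand in r["brands"]:
            hits[r["platform"]] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


for brand in ("AcmeHR", "PeopleCo"):
    print(brand, mention_rate(responses, brand))
```

Run the same calculation on a schedule and compare the results over time to see whether you're gaining ground on each platform.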

Book a demo to see how this analysis works in practice. We'll show you the methodology behind understanding mention patterns and explain how successful teams use this intelligence to improve their AI visibility. This gives you the foundation to make decisions based on actual data rather than guesswork.