Full Guide to Tracking and Fixing AI Brand Sentiment

Most people think that tracking their brand in AI is just about showing up in the answer. The second half of the equation is understanding HOW you show up in the answer. Does AI mention your brand in a positive light? Or could it be spreading misinformation about your brand?

These details in the AI's responses can shape how people perceive your brand. This article walks through how marketing teams can analyze their brand's sentiment in AI, fix it through targeted content changes, and measure whether those adjustments are actually working.

What Is AI Brand Sentiment?

AI brand sentiment is the tone, positioning language, and contextual framing that large language models use when describing your brand in generated responses. It differs from traditional social listening or review monitoring because you're not measuring what customers say about you on public platforms. You're measuring what AI says about you to potential buyers when they ask evaluation-stage questions.

Traditional sentiment tools scrape reviews, social posts, and forums. AI brand sentiment analysis looks at the actual output text of ChatGPT, Perplexity, Gemini, Claude, and other models when prompted with queries relevant to your category. The data source is the AI answer itself. That matters because the answer is synthesized from content the model cites, so if you influence which content gets cited, you can influence how your brand is described.

How It Differs from Visibility

Visibility answers a binary question: does your brand appear in the AI response? Sentiment answers a qualitative one: when your brand does appear, how is it described? These are separate problems requiring separate tracking approaches.

A brand with 40% visibility and weak sentiment is in a different position than a brand with 20% visibility and strong sentiment. You can have high visibility and still lose the framing battle.

Positive, Negative, and Neutral, and Why Tone Isn't Enough

The basic sentiment taxonomy (positive, negative, neutral) is a starting point, not the full picture:

- "Brand X is affordable for smaller teams" scores as positive. But it signals the product can't scale. A mid-market buyer moves on.

- "Brand Y is a good starting point for companies new to GEO" is positive too. But "starting point" tells experienced buyers to keep looking.

Tone is fine in both cases. The framing is the problem.
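One rough way to catch this kind of framing is to scan answers for qualifiers that limit who the product is for, separately from any tone score. The sketch below is only an illustration: the phrase list and the `limiting_frames` helper are assumptions made up for this example, and a real list would come from reading your own category's answers.

```python
# Hypothetical phrase list; replace with qualifiers seen in your own category.
LIMITING_PHRASES = ("smaller teams", "starting point", "new to")


def limiting_frames(answer_text, phrases=LIMITING_PHRASES):
    """Return the limiting qualifiers found in an answer (case-insensitive)."""
    text = answer_text.lower()
    return [phrase for phrase in phrases if phrase in text]


print(limiting_frames("Brand X is affordable for smaller teams"))
# ['smaller teams']
print(limiting_frames("Brand Y is a good starting point for companies new to GEO"))
# ['starting point', 'new to']
```

Both sentences would pass a positive/negative tone check, yet both get flagged here, which is the distinction between tone and framing.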

Sentiment Themes

Sentiment themes are the specific brand attributes that AI models evaluate and describe separately. Pricing, customer support quality, usability, onboarding experience, integration depth, and security posture are all distinct themes that models address independently in their responses.

A model might describe your product positively on usability but negatively on pricing in the same response. Tracking sentiment at the theme level reveals which specific attributes need attention, rather than reducing everything to a single score.

Why AI Brand Sentiment Matters for B2B SaaS

Evaluation-stage queries are where sentiment has the most purchase-decision impact. When a buyer asks "Is [your brand] worth the price?" or "How does [your brand] compare to [competitor] for enterprise teams?", the model's framing directly shapes perception before that buyer ever talks to your sales team.

Improving sentiment on one attribute also has a spillover effect. Models become more likely to recommend your brand in broader, high-volume category queries because the stronger attribute feeds into the model's overall understanding of your brand.

The Brand Stripping Problem

Your content can be the source behind an AI answer while your brand name is completely absent from the response. Citation rate (how often your URLs appear as sources) and mention rate (how often your brand name appears in the answer text) are two different metrics, and the gap between them reveals a problem called brand stripping.

A brand with a 30% citation rate and a 5% mention rate has content that shapes answers but gets no credit in front of the buyer. The model uses your research, your feature descriptions, your comparisons, then attributes the synthesized insight to no one. Fixing brand stripping requires content that makes your brand name inseparable from the data it contains.
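If you log each answer's cited URLs and text, the gap can be measured with a few lines of code. This is a minimal sketch under stated assumptions: the `AnswerRecord` shape, the `stripping_gap` function, and the "Acme" brand and domain are all hypothetical, and the substring matching is deliberately crude.

```python
from dataclasses import dataclass


@dataclass
class AnswerRecord:
    """One AI answer to one prompt (hypothetical record shape)."""
    cited_urls: list[str]  # source URLs the model listed
    answer_text: str       # the generated answer text


def stripping_gap(records, brand_name, brand_domain):
    """Return (citation_rate, mention_rate, gap) as fractions of all answers."""
    if not records:
        return 0.0, 0.0, 0.0
    # Crude substring checks; real matching would normalize URLs and brand aliases.
    cited = sum(any(brand_domain in url for url in r.cited_urls) for r in records)
    mentioned = sum(brand_name.lower() in r.answer_text.lower() for r in records)
    citation_rate = cited / len(records)
    mention_rate = mentioned / len(records)
    return citation_rate, mention_rate, citation_rate - mention_rate


# Made-up sample data for illustration only.
records = [
    AnswerRecord(["https://acme.example/report"], "Industry research shows X."),
    AnswerRecord(["https://acme.example/pricing"], "Acme starts at a flat monthly fee."),
    AnswerRecord(["https://other.example/post"], "Several tools cover this."),
    AnswerRecord([], "It depends on team size."),
]
citation_rate, mention_rate, gap = stripping_gap(records, "Acme", "acme.example")
print(citation_rate, mention_rate, gap)  # 0.5 0.25 0.25
```

A large positive gap means your pages are feeding answers that never name you.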

Sentiment Affects Visibility, Not Just Reputation

Negative or weak framing in evaluation prompts doesn't stay contained to those prompts. When models develop a weak framing pattern for your brand on specific attributes, that pattern leaks into broader recommendation queries. A brand consistently described as having a "steep learning curve" will appear less frequently in "best tools for [category]" responses, even when the query doesn't mention onboarding.

Why Different Models Describe the Same Brand Differently

ChatGPT might describe your brand as "a strong option for mid-market teams" while Perplexity calls you "primarily suited for startups." The divergence comes from a two-layer dynamic.

The first layer is training data. Each model was trained on a different corpus at a different cutoff date, creating different baseline "knowledge" about your brand. Changing training data takes time; you're waiting for the next training cycle. The second layer is live retrieval. Models like Perplexity and ChatGPT with search pull current web content in real time, meaning content changes can shift framing in weeks rather than months.

Analyzing Brand Sentiment Data

Benchmark Your Current Sentiment

Before optimizing anything, establish a baseline. Run your brand through AI models across category-relevant prompts and document how each model describes you today. Without a starting point, you can't measure whether changes are working.

Record sentiment at the theme level (pricing, usability, support, etc.) and by model. The goal is a clear map of your current framing landscape, not a single score.
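For teams keeping the baseline in a spreadsheet or script, one possible shape is a count of labels per model and theme. The tuple layout, the `build_baseline` helper, and the sample rows below are assumptions for illustration, not a prescribed format.

```python
from collections import Counter, defaultdict


def build_baseline(observations):
    """Group labelled observations into (model, theme) -> label counts."""
    baseline = defaultdict(Counter)
    for model, theme, label, _quote in observations:
        baseline[(model, theme)][label] += 1
    return {key: dict(counts) for key, counts in baseline.items()}


# Made-up sample rows: (model, theme, label, quote from the answer).
observations = [
    ("model_a", "usability", "positive", "sample quote about ease of use"),
    ("model_b", "pricing", "negative", "sample quote about cost"),
    ("model_b", "pricing", "neutral", "sample quote describing the plan"),
]
baseline = build_baseline(observations)
for (model, theme), counts in sorted(baseline.items()):
    print(model, theme, counts)
```

Keeping the quote alongside each label preserves the exact language for later comparison, even though the counts ignore it.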

Identify Your Sentiment Themes

Map which specific brand attributes AI is evaluating for your brand. Pricing sentiment, support sentiment, and onboarding sentiment each require different content fixes, so lumping them together obscures the real priorities.

Prioritize themes where negative framing has the highest purchase-decision impact. A negative pricing frame on a brand that sells to cost-sensitive buyers is more urgent than a negative sentiment on a feature most buyers don't care about.
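One simple way to make that prioritization explicit is to multiply how often a theme is framed negatively by how much it matters to your buyers. The scoring rule, the `rank_themes` function, and the weights below are assumptions you would set yourself, not an established formula.

```python
def rank_themes(theme_stats, impact_weights):
    """Rank themes by (share of negative framings) x (purchase-impact weight).

    theme_stats: theme -> (negative_count, total_count)
    impact_weights: theme -> weight chosen by your team (defaults to 1.0)
    """
    scored = []
    for theme, (negative, total) in theme_stats.items():
        if total == 0:
            continue  # no observations yet, nothing to rank
        weight = impact_weights.get(theme, 1.0)
        scored.append((theme, (negative / total) * weight))
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Made-up numbers: pricing is negative less often but matters more to buyers.
stats = {"pricing": (6, 10), "integrations": (8, 10), "security": (0, 0)}
weights = {"pricing": 3.0, "integrations": 1.0}
ranking = rank_themes(stats, weights)
print([theme for theme, _score in ranking])  # ['pricing', 'integrations']
```

Here pricing outranks integrations despite fewer negative framings, because the weight reflects a cost-sensitive buyer.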

Run Branded and Non-Branded Prompts

Non-branded prompts ("What's the best GEO platform for reporting and monitoring?") measure visibility. Branded prompts ("How does Gauge help companies track AI visibility?") reveal how AI describes your brand when a buyer is evaluating you directly. These two prompt types answer different questions and produce different data.

In Gauge, branded prompts are toggled separately because visibility is always near 100% (the model will talk about you when you're named in the prompt). Gauge excludes branded prompts from ranking and citation metrics by default to avoid inflating organic visibility scores, treating them instead as a dedicated sentiment analysis surface.
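The effect of that exclusion is easy to see in a small sketch. This illustrates the idea only and is not Gauge's implementation; the record keys, the `visibility_rate` function, and the "Acme" sample data are hypothetical.

```python
def visibility_rate(results, brand_name, include_branded=False):
    """Share of answers that mention the brand, skipping branded prompts by default.

    results: list of dicts with 'answer' (str) and 'branded' (bool) keys.
    """
    pool = [r for r in results if include_branded or not r["branded"]]
    if not pool:
        return 0.0
    hits = sum(brand_name.lower() in r["answer"].lower() for r in pool)
    return hits / len(pool)


# Made-up sample data for illustration only.
results = [
    {"branded": True, "answer": "Acme tracks how models describe a brand."},
    {"branded": False, "answer": "Popular options include Acme and others."},
    {"branded": False, "answer": "It depends on your reporting needs."},
]
organic = visibility_rate(results, "Acme")
inflated = visibility_rate(results, "Acme", include_branded=True)
print(organic)             # 0.5
print(round(inflated, 2))  # 0.67
```

Including the branded prompt lifts the rate from 0.5 to about 0.67 without any real change in organic visibility.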

Read Sentiment by Model

Pull direct quotes from each model's responses. When ChatGPT says Gauge is "best for data-driven teams" and another model calls the $99 plan "potentially expensive," those are two different signals requiring two different responses. Aggregate sentiment scores hide model-level discrepancies that matter for targeted content fixes.

Gauge surfaces specific model quotes alongside sentiment analysis, making it possible to see exactly which model is framing your brand weakly and what language it's using. That specificity is what turns monitoring into actionable intelligence.

Compare Against Competitors

Sentiment data becomes strategic when you compare it against how models describe your competitors. If a competitor is consistently framed as "the leader in enterprise onboarding" while your brand is described as "also suitable for enterprise use," that gap identifies exactly where your content needs to make a stronger claim with better evidence.

How to Improve Brand Sentiment in AI (Step by Step)

Address Negative Themes Directly

When AI consistently frames a brand attribute negatively, the fix is evidence-based content that directly addresses the specific claim. If models say your product has a "steep learning curve," publish onboarding time data, customer success stories with specific timelines, and comparison benchmarks. The appropriate action is to counter the claim with evidence in your content rather than simply deny it.

Fixing the pricing perception for Gauge's $99 plan, for example, would require content that contextualizes the price against competitor pricing, total cost of ownership, and value delivered. Because sentiment on one attribute feeds into broader recommendations, that single fix could ripple into broader category queries.

Build Semantic Consistency Across Owned Properties

Every owned surface should use the same core positioning language: homepage, product pages, blog posts, LinkedIn, press releases, and schema markup. If the language contradicts itself across those surfaces, models can't confidently assign your brand a clear identity and default to vague descriptions.

Publish First-Party Data and Proprietary Research

Original data, named benchmarks, and branded research create what you might call "brand-linked facts." When a model cites a statistic from "Gauge's research," it has to name Gauge. Content with proprietary data is structurally harder to strip because the brand name is embedded in the fact itself.

Case studies with specific, time-bound outcomes work the same way. "Vellum went from 1.4% to 40.3% AI visibility in seven months using Gauge" is a citable fact that requires brand attribution. Generic claims like "our customers see improved visibility" are easy for models to anonymize.

Update Outdated Content

Stale pricing pages, deprecated feature descriptions, and outdated comparison content are common sources of negative AI framing. If your pricing changed six months ago but your old pricing page still ranks, models may pull the outdated information and present it as current.

Audit content where AI is likely pulling information: pricing pages, feature comparison pages, integration documentation, and help center articles. A systematic content refresh eliminates the source material feeding inaccurate framing.

Use Structured Data

Schema markup helps models extract and attribute brand information accurately. When your structured data clearly defines your product category, pricing model, feature set, and company entity, models have cleaner signals to work with during synthesis.

Clear H2/H3 structure, explicit positioning statements, and FAQ sections with direct answers all contribute to what models can cleanly extract. LLMs synthesize from the clearest signal available, so structured content wins over buried positioning in long-form paragraphs.
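As a concrete illustration, the snippet below builds a JSON-LD block using the schema.org `SoftwareApplication`, `Offer`, and `Organization` types. The `product_jsonld` helper and every value passed to it (brand name, category, price, URL) are placeholders, and which properties you include is a judgment call for your own product.

```python
import json


def product_jsonld(name, category, description, price, currency, org_name, org_url):
    """Build a schema.org SoftwareApplication description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "applicationCategory": category,
        "description": description,
        "offers": {"@type": "Offer", "price": price, "priceCurrency": currency},
        "publisher": {"@type": "Organization", "name": org_name, "url": org_url},
    }


# Placeholder values; substitute your real positioning and pricing.
markup = product_jsonld(
    name="ExampleBrand",
    category="BusinessApplication",
    description="ExampleBrand is an analytics platform for mid-market teams.",
    price="49.00",
    currency="USD",
    org_name="ExampleBrand Inc.",
    org_url="https://www.example.com",
)
# Paste the output into a <script type="application/ld+json"> tag on the page.
print(json.dumps(markup, indent=2))
```

The description field is a natural place to repeat the same positioning sentence used on the page itself, so the markup and the visible copy agree.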

Track Whether the Changes Are Working

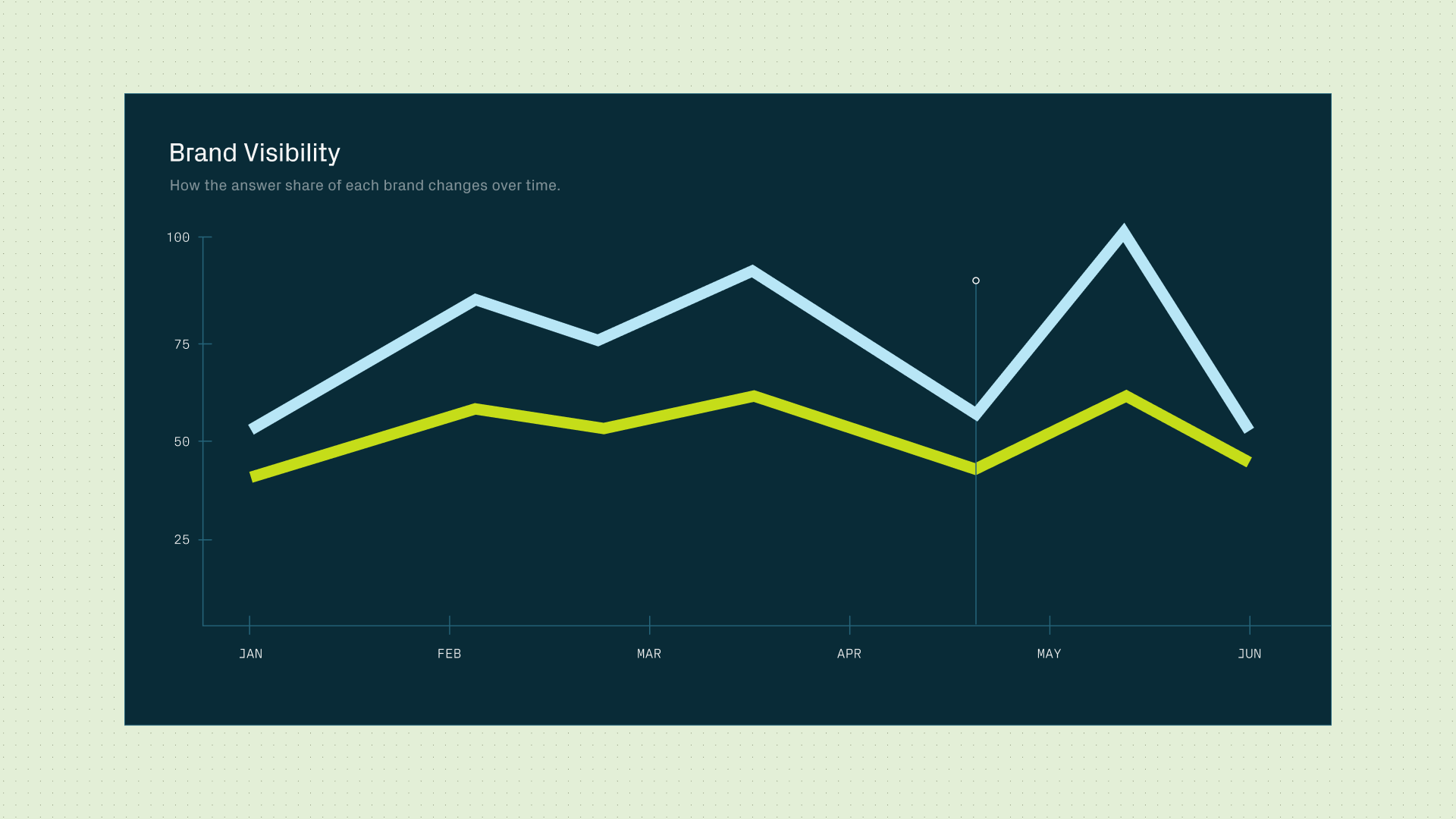

Four core metrics tell you if your content changes are shifting AI framing: visibility rate, citation rate, mention rate, and sentiment score. Track all four because they can move independently. Your visibility might increase while your sentiment stays flat, or your citation rate might climb while your mention rate remains low, which is a sign of brand stripping.

Models with live retrieval (Perplexity, ChatGPT with search) typically reflect content changes within 2 to 8 weeks. Models relying primarily on training data take longer. Tracking by model lets you see which models have picked up your changes and which still reflect older framing.
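A per-model comparison against the baseline can be as simple as subtracting two snapshots. The snapshot layout, the metric key names, the `metric_deltas` function, and the numbers below are assumptions for illustration.

```python
METRICS = ("visibility_rate", "citation_rate", "mention_rate", "sentiment_score")


def metric_deltas(baseline, current):
    """Return model -> {metric: change since baseline} for models in both snapshots."""
    deltas = {}
    for model, before in baseline.items():
        after = current.get(model)
        if after is None:
            continue  # model missing from the newer snapshot
        deltas[model] = {m: round(after[m] - before[m], 4) for m in METRICS}
    return deltas


# Made-up snapshots: model_a has picked up the content change, model_b has not.
baseline = {
    "model_a": {"visibility_rate": 0.20, "citation_rate": 0.30,
                "mention_rate": 0.05, "sentiment_score": 0.40},
    "model_b": {"visibility_rate": 0.25, "citation_rate": 0.10,
                "mention_rate": 0.10, "sentiment_score": 0.50},
}
current = {
    "model_a": {"visibility_rate": 0.30, "citation_rate": 0.30,
                "mention_rate": 0.15, "sentiment_score": 0.40},
    "model_b": {"visibility_rate": 0.25, "citation_rate": 0.10,
                "mention_rate": 0.10, "sentiment_score": 0.50},
}
deltas = metric_deltas(baseline, current)
for model, changes in deltas.items():
    print(model, changes)
```

In this sample, model_a's visibility and mention rate rise while its sentiment score stays flat, which is the kind of independent movement worth watching.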

How Gauge Helps Brands Track and Improve AI Brand Sentiment

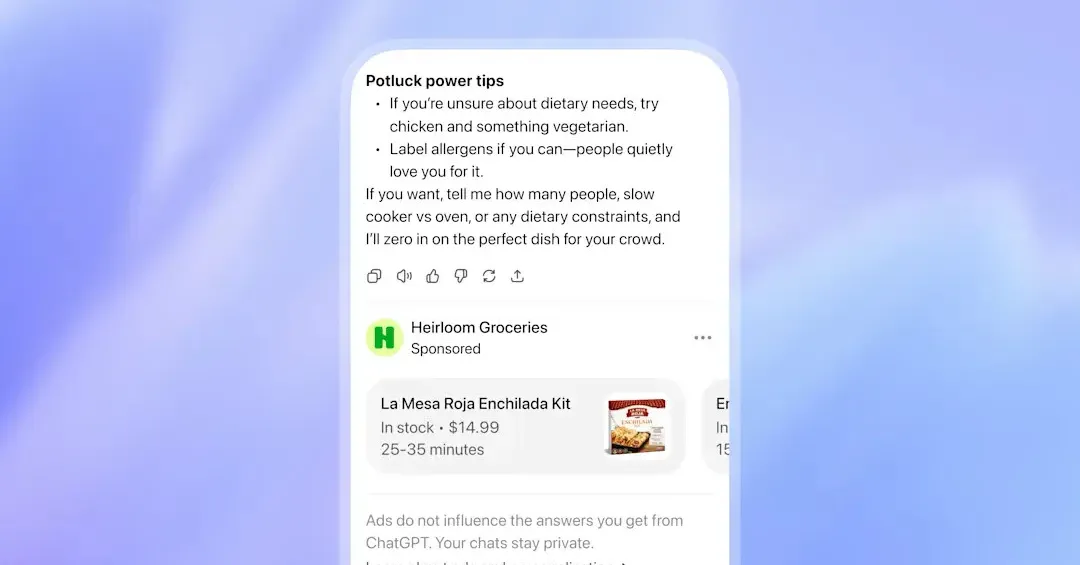

Gauge tracks AI brand sentiment across every prompt and model in your account. The sentiment analysis pulls from the full dataset of answers generated daily across ChatGPT, Perplexity, Gemini, Grok, and Copilot, surfacing the specific language models use to describe your brand with direct quotes from responses.

The platform separates branded and non-branded prompts. Non-branded prompts measure organic visibility. Branded prompts reveal how AI describes you when a buyer is actively evaluating your product. Gauge excludes branded prompts from ranking and citation metrics by default and treats them as a dedicated sentiment surface instead.

Sentiment data is broken down by model and theme. If ChatGPT frames your product as "best for data-driven teams" while another model calls your pricing "potentially expensive," those are two different problems requiring two different content fixes.

Most tools stop at surfacing the data. Gauge connects sentiment findings directly to content briefs and article generation, so teams can act on what they find and track whether it worked.

FAQ

How is AI brand sentiment different from traditional brand monitoring?
Traditional tools track what people say about you on social media, reviews, and forums. AI brand sentiment tracks what LLMs say about you to buyers in generated answers. Gauge analyzes the actual output text of models like ChatGPT, Perplexity, and Gemini, surfacing the specific language and framing they use when describing your brand.

How long does it take to improve AI brand sentiment?
For models with live web retrieval (Perplexity, ChatGPT with search), content changes typically shift framing within 2 to 8 weeks. Models relying on training data take longer, potentially until the next training cycle. Gauge tracks sentiment by model so you can see which models have picked up your changes and which haven't yet.

What's the difference between branded and non-branded prompts?
Non-branded prompts ("best GEO tools for SaaS companies") measure visibility. Branded prompts ("How does [your brand] handle enterprise onboarding?") measure how AI describes you when a buyer is actively evaluating your product. In Gauge, the branded toggle separates these prompt types, and branded prompts are excluded from visibility rankings by default since the model will almost always mention the named brand.

Can I track AI brand sentiment without a dedicated tool?
You can manually query AI models and document responses, but the process doesn't scale. You'd need to run dozens of prompts across multiple models, record the exact response text, classify sentiment by theme, and repeat the process regularly to track changes. Gauge automates this across all prompts and models in one account, with model-level quotes and trend tracking built in.

Which sentiment themes should I prioritize fixing?
Start with themes that have the highest purchase-decision impact for your buyer persona. If your buyers are price-sensitive, negative pricing framing is more urgent than negative framing on a secondary feature. Gauge's sentiment analysis surfaces which themes appear most frequently in AI responses and whether they're framed positively or negatively, helping you prioritize based on actual model output rather than assumptions.

Does fixing AI brand sentiment also improve AI visibility?
Yes. Peec AI's multiplier effect research shows that improving how AI perceives one brand attribute increases the likelihood of recommendation in broader category queries. Gauge tracks both sentiment and visibility, so you can measure whether sentiment fixes are translating into higher recommendation rates across non-branded prompts.
