How to Optimize Content for AI Citations

TLDR: AI is changing how people find answers. ChatGPT, Perplexity, and Gemini now handle billions of queries monthly, and users are increasingly getting answers directly from those platforms instead of clicking through to search results. The brands that show up in those answers share one thing: their content gets cited. Getting cited consistently comes down to how well your content is structured for AI retrieval. This guide breaks down the full citation optimization process: how AI models search and select sources, what on-page and authority signals move citation rate, what technical mistakes block it, and how to track and repeat the cycle using Gauge.

How Do AI Models Search for Answers

Step 1: The User Query

The prompt a user submits to ChatGPT, Perplexity, or Gemini is the starting point of the entire retrieval chain. These prompts look nothing like traditional Google searches. According to Gauge data, the search queries AI models generate from these prompts average 11.1 words, compared to 2-3 words for a typical human Google search. Users ask full questions with context, constraints, and qualifiers baked in.

Step 2: The Search Decision

The model first evaluates whether its training data (parametric memory) can answer the question or whether it needs to retrieve live information from the web. Settled factual questions ("What year was the Eiffel Tower built?") rarely trigger retrieval. Commercial, product, and category queries almost always do, because they require current information the model wasn't trained on.

For citation optimization, the retrieval-required bucket is where the opportunity lives. When a model decides to search, your content can become a source.

Step 3: Web Search and Query Rewriting

When retrieval is triggered, the model writes its own search queries. These queries are longer, more specific, and syntactically different from what a human would type. Gauge data shows models run approximately 2.7 searches per user prompt, often in parallel. The most common words AI models insert into those queries include: "tools," "2026," "list," "comparison," "software," "best," and "platforms."

The implication is direct. Content structured as listicles, comparisons, and roundups surfaces more frequently because it matches the query language models generate. A page titled "Best Project Management Tools: 2026 Comparison" maps to these inserted terms far better than "Our Approach to Project Management."
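To make the title comparison concrete, here is a minimal Python sketch that counts how many of the reported inserted terms appear in a title. The term list comes from the Gauge data quoted above; the function name `inserted_term_coverage` and the whole-word matching rule are illustrative assumptions, not how any model actually ranks pages.

```python
import re

# Terms the article reports AI models most often insert into rewritten queries.
INSERTED_TERMS = {"tools", "2026", "list", "comparison", "software", "best", "platforms"}

def inserted_term_coverage(title: str) -> set[str]:
    """Return the reported query terms that appear as whole words in the title."""
    words = set(re.findall(r"[a-z0-9]+", title.lower()))
    return INSERTED_TERMS & words

strong = inserted_term_coverage("Best Project Management Tools: 2026 Comparison")
weak = inserted_term_coverage("Our Approach to Project Management")

print(sorted(strong))  # ['2026', 'best', 'comparison', 'tools']
print(sorted(weak))    # []
```

The first title covers four of the seven terms and the second covers none, which is the gap the paragraph above describes.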

Step 4: Retrieval and RAG Chunking

After running its searches, the model receives standard search results, selects pages to read, and breaks them into chunks of roughly 200 to 1,000 tokens each (Seattle Organic SEO / Princeton GEO research). Each chunk is evaluated independently as a potential source. A single page might produce 10-15 chunks, and the model scores each one on relevance to the specific sub-question it's answering.

Where you place the answer within a section, how self-contained each section is, and whether the model can extract a clean passage without needing surrounding context all determine whether your content survives this step. AI models scan 50-60 results per query (Gauge data), compared to the top 3 that human searchers typically review. Ranking #1 is less important than being structured for extraction.
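The chunk-and-score step can be approximated in a few lines of Python. This is a rough sketch under stated assumptions: whitespace-separated words stand in for tokens, paragraphs are packed into chunks up to a hypothetical `max_words` limit, and simple word overlap stands in for whatever relevance scoring a real model applies. None of this is a documented retrieval implementation.

```python
import re

def split_into_chunks(text: str, max_words: int = 300) -> list[str]:
    """Split on blank lines, then pack paragraphs into chunks of at most max_words words."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks, current, count = [], [], 0
    for para in paragraphs:
        n = len(para.split())
        # Start a new chunk when adding this paragraph would exceed the limit.
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks

def overlap_score(chunk: str, question: str) -> float:
    """Fraction of the question's distinct words that also appear in the chunk."""
    q = set(re.findall(r"[a-z0-9]+", question.lower()))
    c = set(re.findall(r"[a-z0-9]+", chunk.lower()))
    return len(q & c) / len(q) if q else 0.0

page = (
    "What is citation rate?\n\n"
    "Citation rate measures how often AI models select your content as a source.\n\n"
    "Our company history\n\n"
    "We were founded by a small team that cares about search."
)
chunks = split_into_chunks(page, max_words=18)
best = max(chunks, key=lambda c: overlap_score(c, "what is citation rate"))
```

Here the page splits into two chunks, and only the first one answers the sub-question on its own. A chunk that opens with the answer scores well no matter what the rest of the page says, which is why self-contained sections matter.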

Step 5: Answer Generation and Citation Assignment

The model synthesizes the highest-scoring chunks into a final answer and assigns citations to the source URLs. Only selected passages become citations. Princeton researchers tested nine optimization tactics across 10,000 queries and found that content structure alone moves citation rate significantly, independent of domain authority or backlink count.

How to Optimize and Structure Content for Citations

With the retrieval pipeline as context, every optimization tactic below maps to a specific step in that chain.

Use Answer Capsules and Front-Loaded Answers

Each H2 or H3 section should open with a direct, 40-60 word answer to the question implied by the heading. Think of it as the extractable capsule: the passage a model can pull cleanly as a citation without needing anything before or after it. Burying the answer in paragraph three of a section means the model's chunk might not contain it at all.

Avoid pronoun-heavy writing that requires context from earlier sections. Repeat key entity names within each section so every chunk is self-contained.
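If you want to audit existing pages against the 40-60 word guideline, a small check like the one below can help. It is a sketch only: it assumes the capsule is the first paragraph of a section (the text before the first blank line), and the function names are made up for illustration.

```python
def capsule_word_count(section_body: str) -> int:
    """Count words in the first paragraph of a section (text before the first blank line)."""
    first_paragraph = section_body.strip().split("\n\n", 1)[0]
    return len(first_paragraph.split())

def has_answer_capsule(section_body: str, low: int = 40, high: int = 60) -> bool:
    """True when the opening paragraph falls inside the 40-60 word capsule range."""
    return low <= capsule_word_count(section_body) <= high

too_short = "It depends.\n\nThe details follow in later paragraphs."
in_range = " ".join(["word"] * 50) + "\n\nSupporting detail goes here."

print(has_answer_capsule(too_short))  # False
print(has_answer_capsule(in_range))   # True
```

Word count alone says nothing about whether the opening paragraph actually answers the heading, so treat a pass as a prompt for a human read, not a verdict.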

Match Heading Structure to AI Query Patterns

Descriptive, question-format headings ("How does X work?" or "What is the best Y for Z?") serve double duty. They act as chunk boundaries, telling the model where one topic ends and another begins. They also map directly to the sub-queries models generate during Step 3.

Clever or vague headings ("The Big Picture" or "Why It All Adds Up") waste the single strongest structural signal you have.

Prioritize Listicles, Tables, and FAQ Sections

Lists and tables are inherently extractable because they present information in discrete, scannable units that map cleanly to model-generated sub-queries.

FAQ sections are equally valuable. Each question-and-answer pair functions as a standalone chunk that mirrors how AI models decompose user prompts into retrievable sub-questions.
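FAQ pairs can also be exposed as structured data. The sketch below builds schema.org `FAQPage` JSON-LD from question-and-answer pairs using only the Python standard library. The helper name `faq_jsonld` is hypothetical, and whether a given AI model reads this markup is not something the code demonstrates.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

markup = faq_jsonld([
    ("What is citation rate?",
     "Citation rate measures how often AI models select your content as a source."),
])
print(markup)
```

The output would typically go inside a `<script type="application/ld+json">` tag on the page that contains the visible FAQ.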

Include Original Data, Statistics, and Citation Blocks

Specific data points and proprietary research are uniquely extractable because competing pages can't replicate them. A sentence like "Pages updated within the last 2 months earn 28% more citations" is a clean citation block: short, factual, self-contained. A paragraph of vague analysis about "the importance of freshness" loses to that specificity every time.

If you have proprietary data, surface it early and clearly. Models are selecting for the most concrete, attributable answer to each sub-query.

Refresh Content Regularly

Pages updated within the last two months earn 28% more citations than stale content. Perplexity runs real-time RAG on every query, which means its recency bias is even stronger than that of other platforms.

At minimum, key pages should be updated within the current year. Add a visible "Last Updated" date, refresh statistics, swap in current examples, and add new sections when the topic evolves.
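A freshness audit can be as simple as comparing each page's last-updated date to a cutoff. The sketch below assumes you already have those dates in a dictionary (the URLs and dates here are invented) and uses a 60-day window as a stand-in for "two months."

```python
from datetime import date

def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 60) -> list[str]:
    """Return URLs whose last update is more than max_age_days before today."""
    return sorted(
        url for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    )

# Hypothetical pages and dates, for illustration only.
pages = {
    "/blog/best-crm-tools": date(2026, 1, 10),
    "/blog/crm-comparison": date(2025, 9, 1),
}
print(stale_pages(pages, today=date(2026, 2, 1)))  # ['/blog/crm-comparison']
```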

Align Title and Slug to AI Query Language

AI models generate their own search queries before retrieving content, and those queries average 11.1 words with terms like "best," "list," and "comparison" baked in. If your title and slug don't match that language, your page won't surface regardless of how well the content is structured. Treat your title and slug as retrieval signals, not branding decisions.
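One low-effort way to keep the slug aligned with the title is to derive it from the title directly, so query terms like "best" and "comparison" carry over. The `slugify` helper below is a minimal sketch; most CMS platforms have their own slug rules, which may differ.

```python
import re

def slugify(title: str) -> str:
    """Lowercase the title and join its alphanumeric runs with hyphens."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")

print(slugify("Best Project Management Tools: 2026 Comparison"))
# best-project-management-tools-2026-comparison
```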

Schema markup won't save poorly written content, but leaving it out needlessly handicaps well-written content.

How to Track Your Citations

Without measurement, you can't prioritize. You also can't prove that the work is producing results. Set up your tracking layer before you start optimizing.

Pull Domain and URL-Level Citation Rate Baselines

Gauge's Citation Analysis provides citation rate at the domain and URL level, with filters by topic, model, and date range. Sort by citation rate change to identify your biggest movers, both positive and negative. Gauge's Health Score runs a nightly audit against nine criteria for AI and traditional search optimization, giving you an automated version of the structural checklist above.

The Ask Gauge agent unifies GA4, GSC, and GEO data into a single analysis layer, so you can correlate citation rate shifts with traffic and conversion changes without toggling between dashboards.
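If you are working from exported numbers, the "biggest movers" sort can be sketched as follows. Everything here is an assumption for illustration: the `UrlStats` fields, the sample rows, and the definition of citation rate as cited answers divided by tracked answers. It does not represent Gauge's API or its exact formula.

```python
from dataclasses import dataclass

@dataclass
class UrlStats:
    url: str
    prev_cited: int   # answers citing the URL in the earlier period
    prev_total: int   # tracked answers in the earlier period
    curr_cited: int   # answers citing the URL in the current period
    curr_total: int   # tracked answers in the current period

def rate(cited: int, total: int) -> float:
    """Citation rate as a fraction; 0.0 when nothing was tracked."""
    return cited / total if total else 0.0

def biggest_movers(rows: list[UrlStats]) -> list[tuple[str, float]]:
    """Return (url, rate change) pairs, largest absolute change first."""
    changes = [
        (r.url, round(rate(r.curr_cited, r.curr_total) - rate(r.prev_cited, r.prev_total), 2))
        for r in rows
    ]
    return sorted(changes, key=lambda item: abs(item[1]), reverse=True)

rows = [
    UrlStats("/pricing", 10, 100, 25, 100),
    UrlStats("/blog/guide", 40, 100, 20, 100),
    UrlStats("/about", 5, 100, 6, 100),
]
movers = biggest_movers(rows)
print(movers)  # [('/blog/guide', -0.2), ('/pricing', 0.15), ('/about', 0.01)]
```

Sorting by absolute change surfaces declines and gains together, which matches the goal of spotting movers in both directions.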

Segment Pages into Four Tiers

Once you have baseline data, categorize every page in your priority topic areas:

Tier 1 (Protect): High citation rate. These pages are working. Don't restructure them; monitor for decay.

Tier 2 (Fix): Declining citation rate. Typically a freshness or structural issue. These are your highest-ROI optimization targets because they were cited before and can be again.

Tier 3 (Optimize): Zero citation rate, high intent. The page exists and covers the topic, but models aren't selecting it. Structural fixes (answer capsules, heading improvements, data density) are the primary lever.

Tier 4 (Create): No page exists for a topic where competitors are being cited. Net new content, ideally using Gauge's Content Engine to generate briefs and articles informed by the structural patterns of already-cited content in the category.
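The four tiers can be expressed as a small decision function. This sketch makes a few assumptions the tiers above leave open: the page targets a high-intent topic where competitors are cited, "declining" means the current rate is below the previous one, and any non-declining, nonzero rate counts as Tier 1. You may want a stricter cutoff for "high."

```python
def assign_tier(page_exists: bool, current_rate: float = 0.0, previous_rate: float = 0.0) -> str:
    """Map a page's citation rates to one of the four tiers described above."""
    if not page_exists:
        return "Tier 4 (Create)"
    if current_rate < previous_rate:
        # Cited before and now slipping: the highest-ROI fix target.
        return "Tier 2 (Fix)"
    if current_rate == 0:
        return "Tier 3 (Optimize)"
    return "Tier 1 (Protect)"

print(assign_tier(True, current_rate=0.30, previous_rate=0.30))  # Tier 1 (Protect)
print(assign_tier(True, current_rate=0.10, previous_rate=0.30))  # Tier 2 (Fix)
print(assign_tier(True, current_rate=0.0, previous_rate=0.0))    # Tier 3 (Optimize)
print(assign_tier(False))                                        # Tier 4 (Create)
```

The decline check runs before the zero check so that a page that dropped to zero lands in Tier 2, since it was cited before and can be again.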

Use the Citation Rate vs. Mention Rate Diagnostic

Gauge tracks two distinct metrics that, together, form a diagnostic no competitor offers:

High citation rate + low mention rate means AI models are using your content as a source but stripping your brand name from the generated answer. The fix is adding brand-linked examples, proprietary data points, and first-person brand perspective that make it harder for models to separate your content from your identity.

Low citation rate + high mention rate means AI knows your brand but ignores your content when building answers. The fix is improving content structure for extractability.

Low citation rate + low mention rate means both your brand and content are invisible. Net new content and authority building are the path forward.

High citation rate + high mention rate is the target state. Protect these pages and replicate the structural patterns that produced the result.
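The four combinations above amount to a two-by-two lookup. The sketch below encodes it in Python. The 0.5 threshold separating "high" from "low" is a placeholder assumption, not a Gauge benchmark, so set it from your own baselines.

```python
def diagnose(citation_rate: float, mention_rate: float, threshold: float = 0.5) -> str:
    """Return the diagnosis for a citation rate / mention rate pair."""
    cited = citation_rate >= threshold
    mentioned = mention_rate >= threshold
    if cited and mentioned:
        return "Target state: protect and replicate"
    if cited:
        return "Brand stripped: add brand-linked examples and proprietary data"
    if mentioned:
        return "Content ignored: improve structure for extractability"
    return "Invisible: create new content and build authority"

print(diagnose(0.7, 0.2))  # Brand stripped: add brand-linked examples and proprietary data
print(diagnose(0.2, 0.7))  # Content ignored: improve structure for extractability
```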

Key Takeaways

- AI models rewrite queries, run multiple searches per prompt, and evaluate 50-60 results. Ranking #1 is less important than being structured for chunk-level extraction.

- Front-loaded answer capsules, descriptive headings, semantic HTML tables, and FAQ sections are the structural signals that move pages from retrievable to cited.

- Freshness is a citation lever: refresh key pages every 8-12 weeks with current data, visible "Last Updated" dates, and new examples.

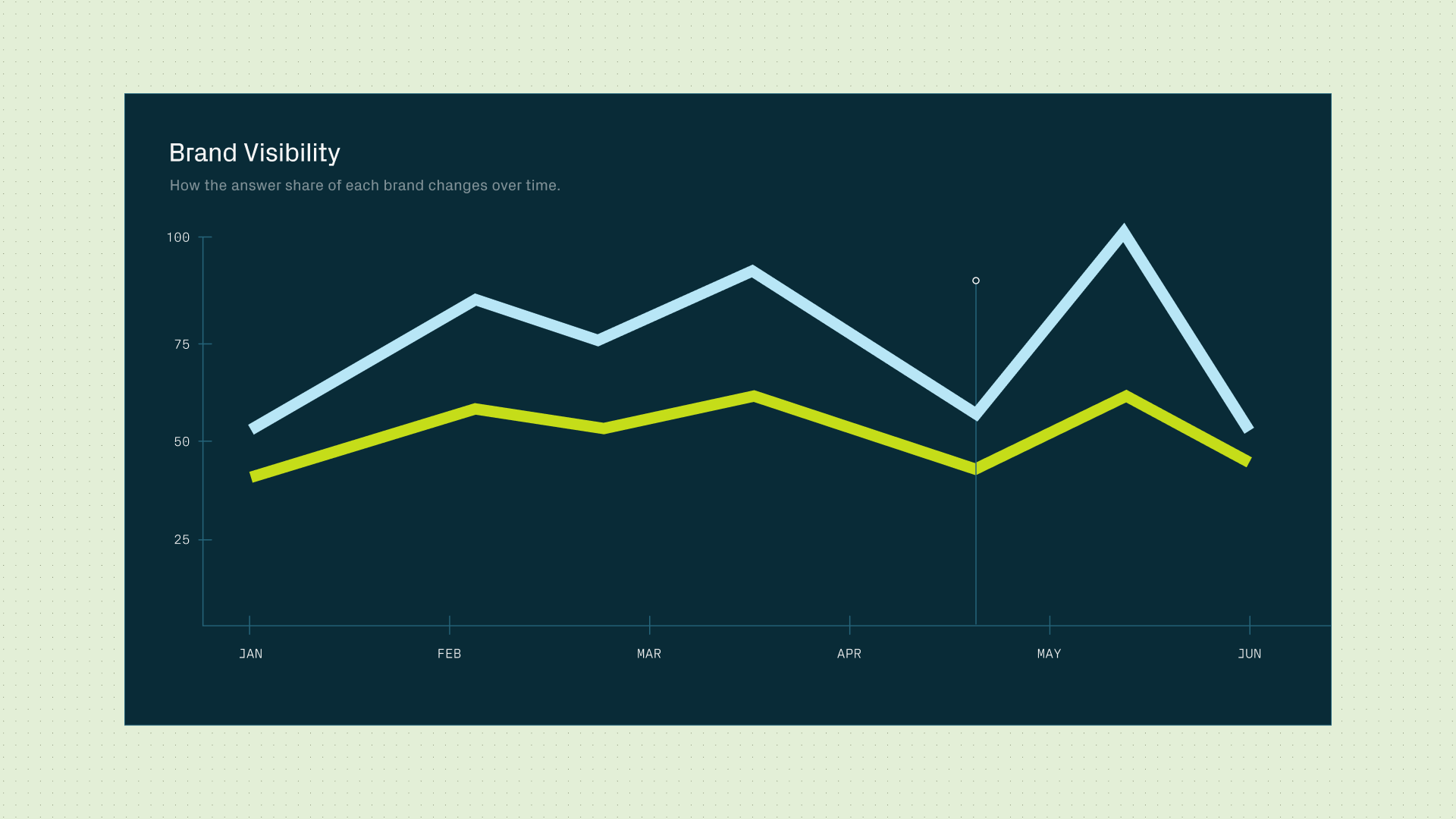

- Platform divergence is steep (only 11% of domains are cited by both ChatGPT and Perplexity). Track citation rate per model to diagnose gaps you'd otherwise miss.

- The citation rate vs. mention rate diagnostic tells you whether you have a content structure problem or a brand identity problem, and each requires a different fix.

- Gauge provides the measurement-to-execution loop: Citation Analysis for baselines, Health Score for automated audits, Actions Center for weekly priorities, and Content Engine for net new content.

FAQ

What is citation rate, and how is it different from a ranking? Citation rate measures how often AI models select your content as a source when answering queries in your topic area. Unlike rankings, which reflect position on a SERP, citation rate reflects whether your content is extracted and used in a generated answer. Gauge tracks citation rate at the domain, URL, topic, and model level.

How long does it take to see results from AI citation optimization? Structural and freshness improvements typically produce measurable citation rate changes within 2-8 weeks (Gauge data). Traditional SEO changes can take months. The faster feedback loop makes monthly optimization cadences practical.

Can a page rank well on Google but still have zero AI citations? Yes. AI models evaluate content at the chunk level, not the page level. A page that ranks well but buries answers in long paragraphs, uses vague headings, or lacks specific data points may never survive the RAG chunking step. Structure for extractability is a separate optimization from structure for rankings.

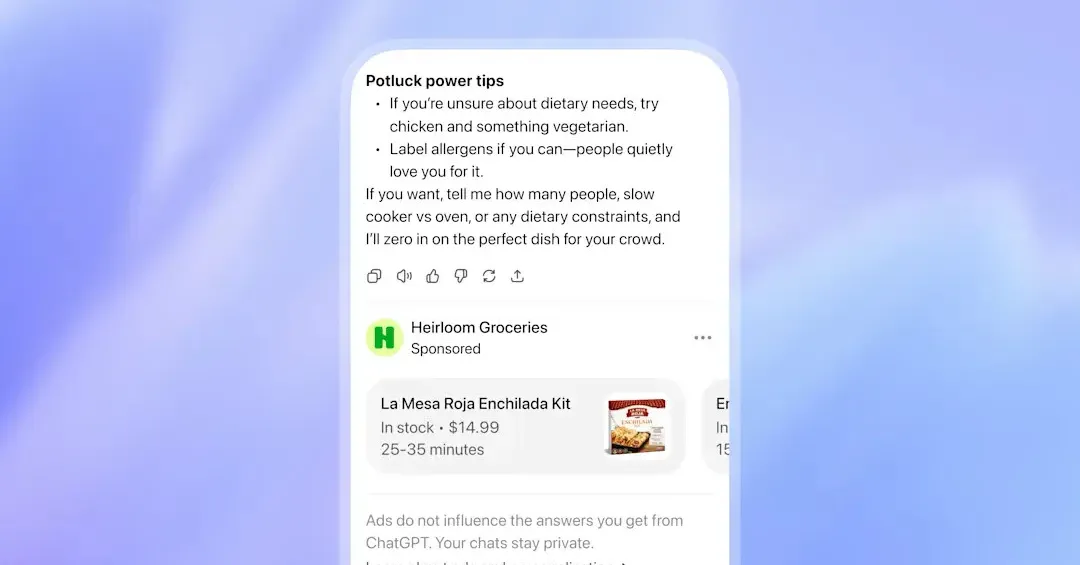

Do I need to optimize separately for each AI model? In practice, yes. ChatGPT uses Google's index and favors listicles. Perplexity runs real-time RAG and rewards recency. Gemini requires strong E-E-A-T and schema. Copilot uses Bing exclusively. The 11% overlap in cited domains between ChatGPT and Perplexity confirms that a single-platform strategy captures a fraction of AI-driven discovery.

How do I check if a specific page is being cited by AI models? Gauge's Citation Analysis shows citation data at the URL level, filtered by model. You can also manually test by running representative queries in each AI platform and checking whether your content appears in the source list. The manual approach doesn't scale, which is why automated tracking at the domain level is the standard for teams running this seriously.

What should I do when citation rate drops for a page that was previously cited? Check freshness first: has the page been updated in the last 8-12 weeks? Then check whether competitors have published newer, better-structured content on the same topic. Gauge's Actions Center flags citation rate declines and recommends specific fixes based on the page's Health Score criteria.

Does Domain Authority predict AI citation performance? Barely. DA correlates at r=0.18 with AI citation probability (Wellows, 2026), which means it explains less than 4% of the variance. E-E-A-T signals, entity presence, author credentials, and content structure are far stronger predictors.
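The "less than 4%" figure follows from squaring the correlation coefficient, which gives the share of variance explained in a simple linear relationship. A quick check in Python, using only the r value quoted above:

```python
r = 0.18                      # reported DA-to-citation correlation
variance_explained = r ** 2   # coefficient of determination

print(f"{variance_explained:.1%}")  # 3.2%
```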

Is schema markup worth the effort for AI citations? The data says yes. Person schema lifts Claude citation rate by 110% (Astiva AI, 1,247 brands), and FAQ/HowTo schema improves chunk-level extractability across models. Schema alone won't make weak content citable, but missing schema handicaps strong content unnecessarily.
